Nvidia is rapidly expanding a business segment that could soon rival its dominant chip division: data center networking. While the company is best known for its AI GPUs, its networking arm has quietly become one of its fastest-growing and most profitable units.

The foundation for this growth was laid in 2020, when Nvidia completed its roughly $7 billion acquisition of Israeli networking firm Mellanox. At the time, the deal received relatively little attention, but it has since become a strategic cornerstone of Nvidia’s AI infrastructure ecosystem.

Today, Nvidia’s networking business plays a critical role in connecting the high-performance data centers used to train and run AI models. The division generated $11 billion in revenue in the most recent quarter alone, a 267% year-over-year increase, and more than $31 billion over the full year, making it the company’s second-largest segment after compute.

This business includes technologies such as NVLink, which enables high-speed communication between GPUs; InfiniBand switches for in-network computing; Spectrum-X Ethernet platforms; and advanced optical networking solutions. Together, these technologies form the backbone of what Nvidia calls an “AI factory”—a fully integrated data center designed specifically for AI workloads.

Industry analysts note that Nvidia’s networking division already competes with the major players in the space. Its networking revenue for a single quarter rivals the annual revenue of established companies in the sector, which underscores the scale and speed of its growth.

Despite its success, the segment has drawn far less attention than Nvidia’s GPU and gaming businesses. Company executives suggest this may be because networking is traditionally perceived as a basic utility rather than a core computing component.

However, Nvidia sees it differently. The company considers networking to be fundamental to modern computing, especially in AI environments where massive amounts of data must move efficiently between processors.

By combining its GPU leadership with a full-stack networking solution, Nvidia has created a tightly integrated ecosystem. This allows the company to deliver complete AI infrastructure rather than just individual components, giving it a competitive edge in building next-generation data centers.

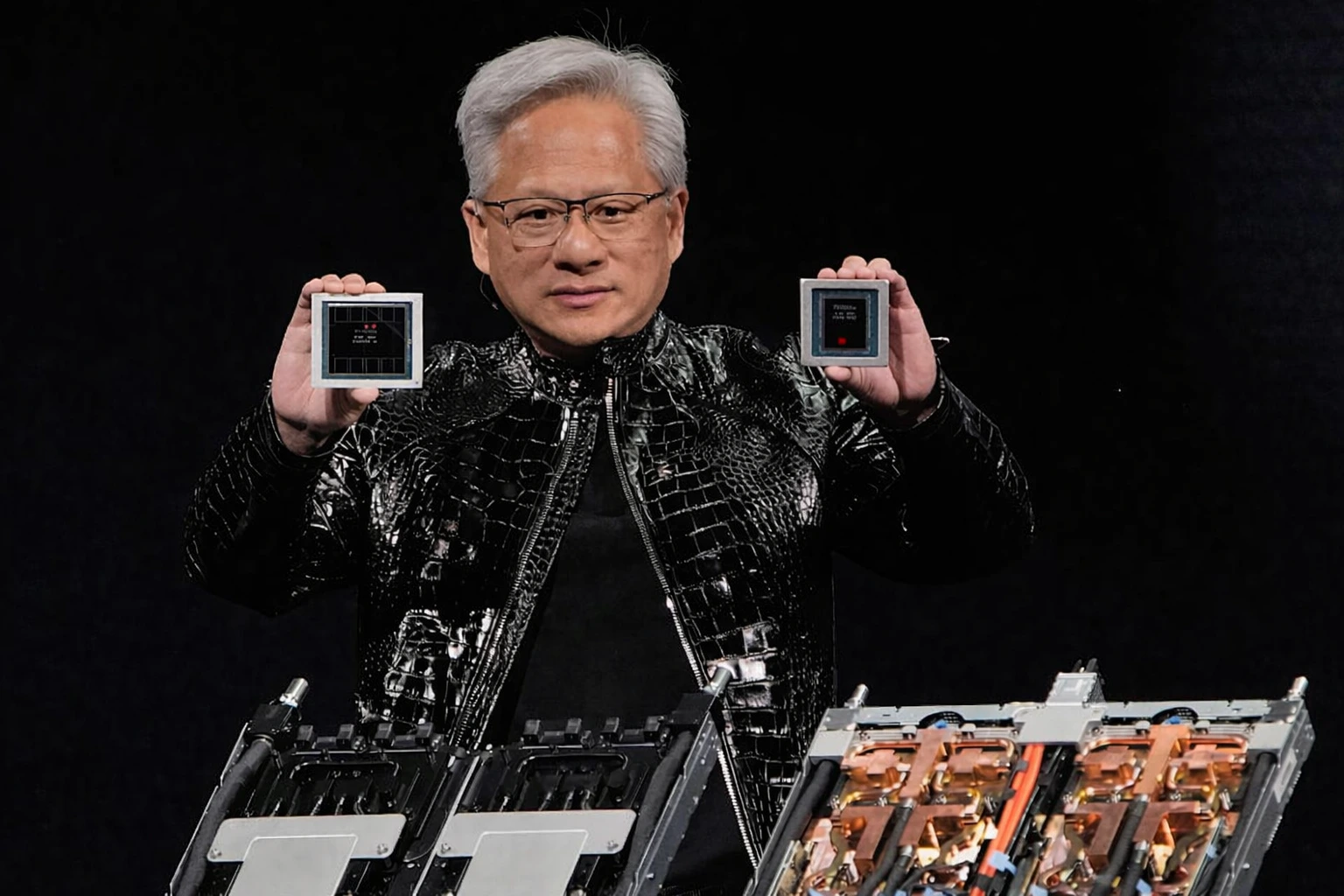

At its recent GTC conference, Nvidia unveiled several new networking advancements, including updates to its Spectrum-X platform, next-generation photonics switches, and the Rubin architecture designed for AI supercomputing.

The strategy reflects CEO Jensen Huang’s long-term vision: transforming Nvidia from a chipmaker into a full-stack AI infrastructure company. With networking now acting as the backbone of AI data centers, Nvidia’s once-overlooked division is becoming a key pillar of its future growth.